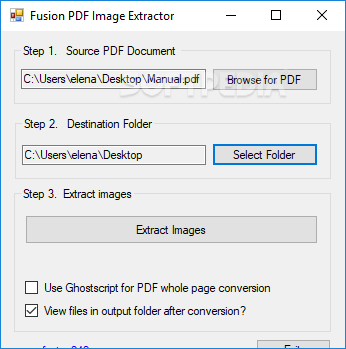

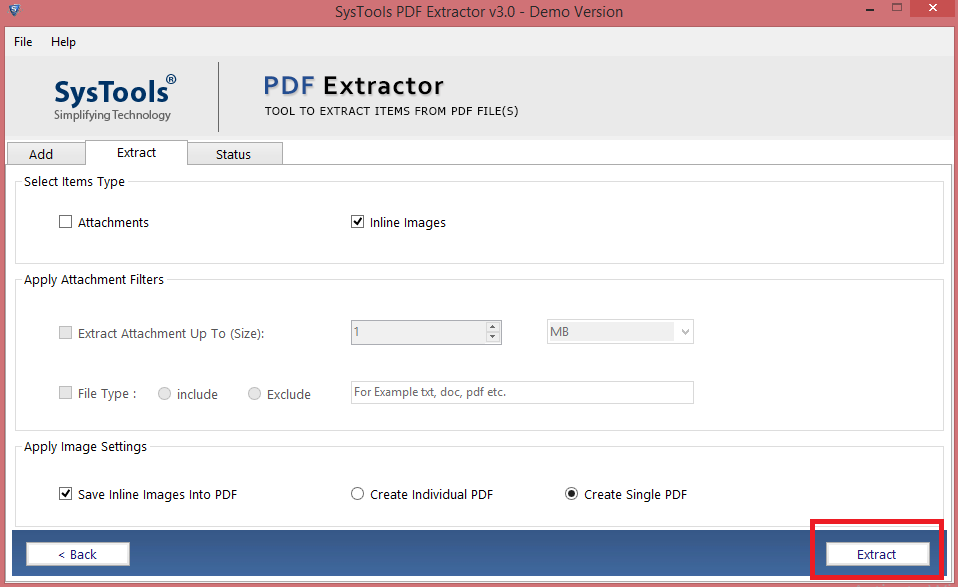

If you’ve never heard of the term before, PDF scraping simply refers to the act of “scraping” or extracting data from PDFs. Businesses have to extract data from PDFs in the first place because of two things: the format of a PDF and the value of data.

As mentioned, PDFs are an unstructured form of data. Unstructured data accounts for about 80% to 90% of data generated and collected by businesses. The challenge that this creates, however, is that the information they contain cannot be processed by software for further analysis. Well, not unless the data is extracted first.

PDFs are used to exchange all manner of business documents such as bank statements, invoices, and receipts. The information in those documents is valuable but can only be processed by software if it’s extracted and placed into structured formats. A PDF on its own is just a flat document for humans to read, but PDF scraping ensures that the data on it can become multi-dimensional in use.

What Makes Data Scraping from PDFs so Difficult?

To be processed directly by data software and understood programmatically, PDFs would need some kind of markup or hierarchy of data. They tend to lack both these things, which is why many businesses have resorted to simply extracting data from PDFs manually.

Manual data entry comes with its own issues though. Whether it’s performed internally or outsourced, it can be time-consuming and costly. Errors are a far greater risk, which may go on to cost a business unnecessary money and time. If a business is receiving hundreds or even thousands of PDFs a day, it’s also by no means an efficient or sustainable way to extract data.

The next step is then ideally building software that could extract the data from the PDFs and enter it into a data processing program. What makes PDF data scraping difficult is what always makes PDFs tricky: they come in a range of layouts and formats. Any software tasked with extracting data from them would need to understand the context of the document and then locate the exact data fields. The software would also need to be easily integrated with whatever is meant to be processing and analyzing the data so as not to bring the whole workflow to a grinding halt. If the PDFs are image-based, extracting data is even more complicated and would require OCR incorporated into the software.

Something that could scrape from PDFs and make information accessible would improve so many everyday business operations. It’s why we’ve developed something that does exactly that, but we’ll get there.

Here’s a step-by-step process of how an Automated PDF Extractor works:

1. The first step involves collecting samples of PDF documents that will serve as training sets for your extraction process. These samples play a vital role in ensuring the optimal performance and high accuracy of the extraction models (extractor). The collected samples are then utilized to train extractors according to your specific needs. The more samples you feed to the extractor, the better the performance in accurately identifying and extracting data from PDFs. To ensure high accuracy of data extraction, you can test out your extractors with sample images with different layouts. Manual validation is essential during this phase, as it helps identify any errors or inconsistencies that the extractor might overlook.
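To make the idea of placing extracted data into a structured format more concrete, here is a minimal Python sketch. It assumes the text has already been pulled out of the PDF (by a text extractor or OCR), and it uses simple regular expressions in place of a trained extractor. The field names, the patterns, and the `extract_fields` and `needs_review` helpers are all hypothetical and fit only one invented invoice layout; they are not how any particular product works.

```python
import re

# Hypothetical patterns for a single invoice layout. Real PDFs come in
# many layouts, which is why rule-based extraction like this is brittle.
PATTERNS = {
    "invoice_number": re.compile(r"Invoice\s*(?:No\.?|#)\s*:?\s*(\S+)", re.IGNORECASE),
    "date": re.compile(r"Date\s*:?\s*(\d{4}-\d{2}-\d{2})", re.IGNORECASE),
    "total": re.compile(r"Total\s*:?\s*\$?\s*([\d,]+\.\d{2})", re.IGNORECASE),
}


def extract_fields(text):
    """Turn flat document text into a structured record (a dict).

    Fields that cannot be located are stored as None.
    """
    record = {}
    for name, pattern in PATTERNS.items():
        match = pattern.search(text)
        record[name] = match.group(1) if match else None
    return record


def needs_review(record):
    """Return the names of fields that were not found.

    A non-empty result means the document should go to manual validation.
    """
    return [name for name, value in record.items() if value is None]


if __name__ == "__main__":
    sample = "ACME Ltd\nInvoice No: INV-1042\nDate: 2024-03-15\nTotal: $1,250.00\n"
    record = extract_fields(sample)
    print(record)
    print("Fields needing manual review:", needs_review(record))
```

A document that does not match the assumed layout simply produces `None` values, and those are the cases a person would check by hand during validation.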
